7 API Interface Function List¶

7.1 Get version information¶

UPvr_GetUnitySDKVersion¶

Function name: public static string UPvr_GetUnitySDKVersion()

Functions: Get SDK version number

Parameter: None

Return value: SDK version number

Method of calling: Pvr_UnitySDKAPI.System.UPvr_GetUnitySDKVersion()

7.2 Sensor tracking related¶

UPvr_StartSensor¶

Function name: public static int UPvr_StartSensor(int index)

Functions: Start sensor tracking

Parameter: index: pass 0

Return value: 0: Call success; 1: Call failure

Method of calling: Pvr_UnitySDKAPI.Sensor.UPvr_StartSensor(index)

UPvr_ResetSensor¶

Function name: public static int UPvr_ResetSensor (int index)

Functions: Reset sensor tracking. The reset direction is determined by the system; by default, both the horizontal and vertical directions are reset

Parameter: index: pass 0

Return value: 0: Call success; 1: Call failure

Method of calling: Pvr_UnitySDKAPI.Sensor.UPvr_ResetSensor (index)

UPvr_OptionalResetSensor¶

Function name: public static int UPvr_OptionalResetSensor (int index, int resetRot, int resetPos)

Functions: Reset sensor orientation and position

Parameter: index: pass 0; resetRot: whether to reset the orientation; resetPos: whether to reset the position (0: do not reset; 1: reset)

Return value: 0: Call success; 1: Call failure

Method of calling: Pvr_UnitySDKAPI.Sensor.UPvr_OptionalResetSensor (0,1,1)
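
As a minimal sketch of how the sensor calls above might be combined, the following Unity script starts tracking at launch and resets only the orientation on request. The class name SensorBootstrap and the Recenter method are illustrative names, not part of the SDK; the return-code checks follow the 0/1 convention listed above.

```csharp
using UnityEngine;

// Hypothetical helper component; only the UPvr_* calls come from the SDK.
public class SensorBootstrap : MonoBehaviour
{
    private const int SensorIndex = 0; // the documented index value

    void Start()
    {
        // 0 means success, 1 means failure (see the return values above).
        int result = Pvr_UnitySDKAPI.Sensor.UPvr_StartSensor(SensorIndex);
        if (result != 0)
        {
            Debug.LogWarning("UPvr_StartSensor failed with code " + result);
        }
    }

    // Reset the orientation (resetRot = 1) and keep the position (resetPos = 0).
    public void Recenter()
    {
        int result = Pvr_UnitySDKAPI.Sensor.UPvr_OptionalResetSensor(SensorIndex, 1, 0);
        if (result != 0)
        {
            Debug.LogWarning("UPvr_OptionalResetSensor failed with code " + result);
        }
    }
}
```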

7.3 Controller related¶

Note: The controller APIs can be used normally only after the controller service has started successfully.

The service-started event is available through Pvr_ControllerManager.PvrServiceStartSuccessEvent.

UPvr_GetControllerPower¶

Function name: public static int UPvr_GetControllerPower(int hand)

Functions: Get controller power

Parameter: hand: for G2 and G2 4K, pass 0; for Neo 2 and Neo 3, 0 is the left controller and 1 is the right controller

Return value: 1 to 5

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetControllerPower(hand)

UPvr_GetControllerPowerByPercent¶

Function name: public static int UPvr_GetControllerPowerByPercent(int hand)

Functions: Get controller power as a percentage

Parameter: hand: for G2 and G2 4K, pass 0; for Neo 2 and Neo 3, 0 is the left controller and 1 is the right controller

Return value: 1 to 100

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetControllerPowerByPercent(hand)

changeMainControllerCallback¶

Function name: public static void changeMainControllerCallback (string index)

Functions: Callback invoked when the main controller changes

Parameter: index: 0/1, the controller that has become the main controller. (The main controller is the one whose ray takes part in UI interaction; it has no fixed relationship with the controller's index number.)

Return value: None

Method of calling: Pvr_ControllerManager.ChangeMainControllerCallBackEvent += XXXXX

UPvr_GetControllerAbility¶

Function name: public static int UPvr_GetControllerAbility(int hand)

Functions: Get whether the current Neo 2 controller supports 3DoF or 6DoF

Parameter: hand: 0/1

Return value: -1: call failure; 0 or 2: 6DoF; 1: 3DoF

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetControllerAbility(hand)

setControllerStateChanged¶

Function name: public static void setControllerStateChanged (string state)

Functions: Callback invoked when the connection status of a Neo 2 or Neo 3 controller changes

Parameter: state: a string in the format (x,x). The first number is the index of the controller, and the second number is its connection status (0: disconnected; 1: connected). For example, 0,0 means controller 0 is disconnected, and 0,1 means controller 0 is connected

Return value: None

Method of calling: Pvr_ControllerManager.SetControllerStateChangedEvent += XXXXX
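
The connection callback could be consumed roughly as follows. This is a sketch under two assumptions that the text above does not confirm: that the event's delegate takes a single string argument (mirroring the callback signature), and that the payload may or may not be wrapped in parentheses. The class name ControllerConnectionLogger is illustrative.

```csharp
using UnityEngine;

// Hypothetical listener; only the event name comes from the SDK.
public class ControllerConnectionLogger : MonoBehaviour
{
    void OnEnable()
    {
        // Assumption: the event's delegate has the shape void(string).
        Pvr_ControllerManager.SetControllerStateChangedEvent += OnControllerStateChanged;
    }

    void OnDisable()
    {
        Pvr_ControllerManager.SetControllerStateChangedEvent -= OnControllerStateChanged;
    }

    private void OnControllerStateChanged(string state)
    {
        // Expected payload: "<controller index>,<connection status>", e.g. "0,1".
        // Surrounding parentheses are stripped in case they are part of the string.
        string[] parts = state.Trim('(', ')', ' ').Split(',');
        int index;
        int status;
        if (parts.Length != 2 ||
            !int.TryParse(parts[0].Trim(), out index) ||
            !int.TryParse(parts[1].Trim(), out status))
        {
            Debug.LogWarning("Unexpected controller state payload: " + state);
            return;
        }

        Debug.Log("Controller " + index + (status == 1 ? " connected" : " disconnected"));
    }
}
```

Unsubscribing in OnDisable keeps the static event from holding a reference to a destroyed object.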

UPvr_GetControllerState¶

Function name: public static ControllerState UPvr_GetControllerState(int hand)

Functions: Get the connection status of the controller

Parameter: hand: for G2 and G2 4K, pass 0; for Neo 2 and Neo 3, pass the index of the controller (0/1).

Return value: Connection status (Note: On Pico Neo with a single controller, the index of the active controller is hard to determine in advance, so it is recommended to call this interface twice to determine the connection status)

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetControllerState(hand)

ResetController¶

Function name: public static void ResetController(int num)

Functions: Reset controller orientation (This interface is not available for Neo 2 and Neo 3)

Parameter: num: for G2 and G2 4K, pass 0.

Return value: None

Method of calling: Pvr_ControllerManager.ResetController(num)

StartUpgrade¶

Function name: public static bool StartUpgrade ()

Functions: Start upgrading the controller

Parameter: None

Return value: true: Success; false: Failure

Method of calling: Pvr_ControllerManager.StartUpgrade()

GetBLEVersion¶

Function name: public static string GetBLEVersion ()

Functions: Get the BLE version

Parameter: None

Return value: BLE version (Not applicable to G2/G2 4K)

Method of calling: Pvr_ControllerManager.GetBLEVersion()

setupdateFailed¶

Function name: public static void setupdateFailed()

Functions: Callback invoked when the upgrade fails

Parameter: None

Return value: None

Method of calling: Either add logic directly inside this method or call it through a delegate

setupdateSuccess¶

Function name: public static void setupdateSuccess()

Functions: Callback invoked when the upgrade succeeds (Not applicable to G2/G2 4K)

Parameter: None

Return value: None

Method of calling: Either add logic directly inside this method or call it through a delegate

setupdateProgress¶

Function name: public static void setupdateProgress (string progress)

Functions: Callback reporting the upgrade progress

Parameter: progress: upgrade progress, 0-100

Return value: None

Method of calling: Either add logic directly inside this method or call it through a delegate

UPvr_GetControllerQUA¶

Function name: public static Quaternion UPvr_GetControllerQUA (int hand)

Functions: Get controller orientation

Parameter: hand: for G2 and G2 4K, pass 0; for Neo 2 and Neo 3, pass the index of the controller (0/1).

Return value: Quaternion, controller orientation

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetControllerQUA(hand)

UPvr_GetControllerPOS¶

Function name: public static Vector3 UPvr_GetControllerPOS (int hand)

Functions: Get controller position

Parameter: hand: for G2 and G2 4K, pass 0; for Neo 2 and Neo 3, pass the index of the controller (0/1).

Return value: 3 dimensional vector, controller position

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetControllerPOS(hand)
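
A sketch of how the two pose getters might drive a GameObject each frame. The unit and reference frame of the returned position are not stated above; the sketch assumes the values can be applied directly as a local pose under a tracking-origin object. The class name ControllerPoseFollower is illustrative.

```csharp
using UnityEngine;

// Hypothetical component; attach it to an object parented under the tracking origin.
public class ControllerPoseFollower : MonoBehaviour
{
    // 0 or 1 on Neo 2 / Neo 3; always 0 on G2 and G2 4K.
    public int hand = 0;

    void Update()
    {
        // Assumption: orientation and position are relative to the tracking origin
        // and already expressed in Unity units.
        transform.localRotation = Pvr_UnitySDKAPI.Controller.UPvr_GetControllerQUA(hand);
        transform.localPosition = Pvr_UnitySDKAPI.Controller.UPvr_GetControllerPOS(hand);
    }
}
```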

UPvr_GetMainHandNess¶

Function name: public static int UPvr_GetMainHandNess()

Functions: Get the index of the current main controller

Parameter: None

Return value: 0/1 (Note: This interface is exclusive to Neo 2 and Neo 3, and it returns the correct value only after the controller service has been bound successfully. The SDK binds the controller service when the application starts; developers are advised to use the bind callback to check whether binding has succeeded)

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetMainHandNess()

UPvr_SetMainHandNess¶

Function name: public static void UPvr_SetMainHandNess(int hand)

Functions: Set the current main controller

Parameter: hand: 0/1

Return value: None

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_SetMainHandNess(hand)

UPvr_GetKey¶

Function name: public static bool UPvr_GetKey (int hand, Pvr_KeyCode key)

Functions: Check whether the key is currently pressed

Parameter: hand: 0/1; key: Pvr_KeyCode

Return value: true: pressed; false: not pressed

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetKey (hand, key)

UPvr_GetKeyDown¶

Function name: public static bool UPvr_GetKeyDown (int hand, Pvr_KeyCode key)

Functions: Check whether the key has just been pressed down

Parameter: hand: 0/1; key: Pvr_KeyCode

Return value: true: pressed once; false: not pressed

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetKeyDown (hand , key)

UPvr_GetKeyUp¶

Function name: public static bool UPvr_GetKeyUp (int hand, Pvr_KeyCode key)

Functions: Check whether the key has just been released

Parameter: hand: 0/1; key: Pvr_KeyCode

Return value: true: released once; false: not released

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetKeyUp (hand , key)

UPvr_GetKeyLongPressed¶

Function name: public static bool UPvr_GetKeyLongPressed (int hand, Pvr_KeyCode key)

Functions: Check whether the key has been long-pressed

Parameter: hand: 0/1; key: Pvr_KeyCode

Return value: true: the key has been held for more than 0.5 seconds; false: the long-press time has not been reached

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetKeyLongPressed (hand , key)

UPvr_GetKeyClick¶

Function name: public static bool UPvr_GetKeyClick (int hand, Pvr_KeyCode key)

Functions: Check whether the key was pressed as a short click

Parameter: hand: 0/1; key: Pvr_KeyCode

Return value: true: the key was pressed for less than 0.5 seconds; false: timed out

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetKeyClick(hand , key)
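
The key queries above are typically polled once per frame. The following sketch logs the different trigger events for one controller. It assumes that Pvr_KeyCode is reachable through the Pvr_UnitySDKAPI namespace and that the controller service has already started; the class name TriggerInputLogger is illustrative.

```csharp
using UnityEngine;
using Pvr_UnitySDKAPI; // assumption: Pvr_KeyCode is declared in this namespace

// Hypothetical polling component; only the UPvr_* calls come from the SDK.
public class TriggerInputLogger : MonoBehaviour
{
    public int hand = 0; // 0 or 1

    void Update()
    {
        if (Pvr_UnitySDKAPI.Controller.UPvr_GetKeyDown(hand, Pvr_KeyCode.TRIGGER))
        {
            Debug.Log("Trigger pressed down");
        }

        if (Pvr_UnitySDKAPI.Controller.UPvr_GetKeyLongPressed(hand, Pvr_KeyCode.TRIGGER))
        {
            Debug.Log("Trigger long-pressed (held for more than 0.5 seconds)");
        }

        if (Pvr_UnitySDKAPI.Controller.UPvr_GetKeyClick(hand, Pvr_KeyCode.TRIGGER))
        {
            Debug.Log("Trigger clicked (released within 0.5 seconds)");
        }

        if (Pvr_UnitySDKAPI.Controller.UPvr_GetKeyUp(hand, Pvr_KeyCode.TRIGGER))
        {
            Debug.Log("Trigger released");
        }
    }
}
```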

UPvr_GetTouch¶

Function name: public static bool UPvr_GetTouch (int hand,Pvr_KeyCode key)

Functions: Checking whether the key is touched

Parameter: 0/1,Pvr_KeyCode (Only TOUCHPAD, TRIGGER, Thumbrest, X and Y have Touch key values)

Return value: true: Yes; false: No

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetTouch (hand , key)

UPvr_GetTouchDown¶

Function name: public static bool UPvr_GetTouchDown (int hand,Pvr_KeyCode key)

Functions: Checking whether the key has just been touched

Parameter: 0/1,Pvr_KeyCode (Only TOUCHPAD, TRIGGER, Thumbrest, X and Y have Touch key values)

Return value: true: Yes; false: No

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetTouchDown (hand , key)

UPvr_GetTouchUp¶

Function name: public static bool UPvr_GetTouchUp (int hand,Pvr_KeyCode key)

Functions: Checking whether the key is lifted after touching

Parameter: 0/1,Pvr_KeyCode (Only TOUCHPAD, TRIGGER, Thumbrest, X and Y have Touch key values)

Return value: true: Lifted; false: Not lifted

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetTouchUp (hand , key)

UPvr_IsTouching¶

Function name: public static bool UPvr_IsTouching (int hand)

Functions: Check whether the touchpad is being touched (This interface is not available for Neo 2 and Neo 3)

Parameter: 0/1

Return value: true: Touching false: Not touching

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_IsTouching(hand)

UPvr_GetJoystickUp¶

Function name: public static bool UPvr_GetJoystickUp(int hand)

Functions: Checking whether the Joystick key is moved upward

Parameter: 0/1

Return value: true: upward false: not upward

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetJoystickUp(hand)

UPvr_GetJoystickDown¶

Function name: public static bool UPvr_GetJoystickDown(int hand)

Functions: Checking whether the Joystick key is moved downward

Parameter: 0/1

Return value: true: downward false: not downward

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetJoystickDown(hand)

UPvr_GetJoystickLeft¶

Function name: public static bool UPvr_GetJoystickLeft(int hand)

Functions: Checking whether the Joystick key is moved left

Parameter: 0/1

Return value: true: left false: not left

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetJoystickLeft(hand)

UPvr_GetJoystickRight¶

Function name: public static bool UPvr_GetJoystickRight(int hand)

Functions: Checking whether the Joystick key is moved right

Parameter: 0/1

Return value: true: right false: not right

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetJoystickRight(hand)

UPvr_GetSwipeDirection¶

Function name: public static SwipeDirection UPvr_GetSwipeDirection(int hand)

Functions: Get the state of a sliding gesture.

Parameter: 0/1

Return value: SwipeDirection

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetSwipeDirection(hand)

UPvr_GetTouchPadPosition (Deprecated. Use the UPvr_GetAxis2D interface instead.)¶

Function name: public static Vector2 UPvr_GetTouchPadPosition (int hand)

Functions: Get the touch value of the touchpad

Parameter: 0/1

Return value: The touch value of the touchpad.

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetTouchPadPosition(hand)

UPvr_GetAxis1D¶

Function name: public static float UPvr_GetAxis1D(int hand, Pvr_KeyCode key)

Functions: Get the analog value of the Trigger/Grip key

Parameter: hand: 0/1; key: Pvr_KeyCode.TRIGGER, Pvr_KeyCode.Left or Pvr_KeyCode.Right

Return value: Range from 0 to 1

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetAxis1D (hand, key)

UPvr_GetAxis2D¶

Function name: public static Vector2 UPvr_GetAxis2D(int hand)

Functions: Get the joystick value

Parameter: 0/1

Return value: Vector2; each component ranges from -1 to 1

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetAxis2D (hand)
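
As an illustration of using the joystick value, the sketch below moves an object on the horizontal plane. The dead zone, the speed and the mapping of the axes to X/Z are choices made for this example only; the class name JoystickMover is illustrative.

```csharp
using UnityEngine;

// Hypothetical locomotion component; only UPvr_GetAxis2D comes from the SDK.
public class JoystickMover : MonoBehaviour
{
    public int hand = 0;          // 0 or 1
    public float speed = 1.5f;    // metres per second at full deflection (example value)
    public float deadZone = 0.1f; // ignore small stick noise (example value)

    void Update()
    {
        // Each component is in the range -1 to 1.
        Vector2 axis = Pvr_UnitySDKAPI.Controller.UPvr_GetAxis2D(hand);
        if (axis.magnitude < deadZone)
        {
            return;
        }

        // Map stick X to world X and stick Y to world Z.
        Vector3 move = new Vector3(axis.x, 0f, axis.y) * speed * Time.deltaTime;
        transform.Translate(move, Space.World);
    }
}
```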

UPvr_GetTouchPadClick¶

Function name: public static TouchPadClick UPvr_GetTouchPadClick(int hand)

Functions: Simulated click function of the touchpad. The touchpad is divided into 4 regions to emulate the up, down, left and right keys of a game controller (This interface is not available for Neo 2 and Neo 3)

Parameter: 0/1

Return value: TouchPadClick

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetTouchPadClick (hand)

UPvr_GetControllerTriggerValue¶

Function name: public static int UPvr_GetControllerTriggerValue(int hand)

Functions: Get the input value of the trigger

Parameter: 0/1

Return value: 0-255 (applicable to Neo 2 and Neo 3)

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetControllerTriggerValue(hand)
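
Because the trigger value is reported as an integer from 0 to 255, it is often convenient to normalize it. A small sketch, where TriggerReader and ReadNormalized are illustrative names rather than SDK members:

```csharp
using UnityEngine;

// Hypothetical helper; only UPvr_GetControllerTriggerValue comes from the SDK.
public static class TriggerReader
{
    // Returns the trigger input scaled to the range 0 to 1.
    public static float ReadNormalized(int hand)
    {
        int raw = Pvr_UnitySDKAPI.Controller.UPvr_GetControllerTriggerValue(hand);
        // Clamp in case a value outside 0-255 is ever reported.
        return Mathf.Clamp01(raw / 255f);
    }
}
```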

UPvr_VibrateNeo2Controller¶

Function name: public void UPvr_VibrateNeo2Controller(float strength, int time, int hand)

Functions: Vibration interface of Neo 2 controller

Parameter: strength: vibration strength, 0-1; time: duration, 0-65535 (milliseconds); hand: 0/1

Return value: None

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_VibrateNeo2Controller (strength, time, hand)
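
A sketch of a wrapper that keeps the arguments inside the documented ranges before triggering a vibration. It assumes the method can be called statically on Controller, as the calling form above shows; ControllerHaptics and Pulse are illustrative names.

```csharp
using UnityEngine;

// Hypothetical helper; only UPvr_VibrateNeo2Controller comes from the SDK.
public static class ControllerHaptics
{
    public static void Pulse(int hand, float strength, int milliseconds)
    {
        float clampedStrength = Mathf.Clamp01(strength);      // documented range: 0-1
        int clampedTime = Mathf.Clamp(milliseconds, 0, 65535); // documented range: 0-65535 ms

        // Argument order follows the signature: strength, time, hand.
        Pvr_UnitySDKAPI.Controller.UPvr_VibrateNeo2Controller(clampedStrength, clampedTime, hand);
    }
}
```

For example, ControllerHaptics.Pulse(1, 0.5f, 200) would request a half-strength, 200 ms vibration on controller 1.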

UPvr_GetDeviceType¶

Function name: public static int UPvr_GetDeviceType ()

Functions: Get the connected controller type

Parameter: None

Return value: 0: No connection; 3: G2, G2 4K; 4: Neo 2; 5: Neo 3

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetDeviceType ();
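
The integer codes above can be turned into readable names with a small lookup. The helper below is a sketch; ControllerTypeNames and Describe are illustrative names, and any code not listed above is reported as unknown rather than guessed.

```csharp
// Hypothetical helper; the code-to-name mapping is taken from the return values above.
public static class ControllerTypeNames
{
    public static string Describe(int deviceType)
    {
        switch (deviceType)
        {
            case 0: return "No connection";
            case 3: return "G2 / G2 4K";
            case 4: return "Neo 2";
            case 5: return "Neo 3";
            default: return "Unknown (" + deviceType + ")";
        }
    }
}
```

A typical call would be ControllerTypeNames.Describe(Pvr_UnitySDKAPI.Controller.UPvr_GetDeviceType()).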

UPvr_GetControllerBindingState¶

Function name: public static int UPvr_GetControllerBindingState(int id)

Functions: Get the controller binding state

Parameter: id: controller ID

Return value: -1: Error; 0: Unbound; 1: Bound

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetControllerBindingState(id)

UPvr_GetAngularVelocity¶

Function name: public static Vector3 UPvr_GetAngularVelocity (int id)

Functions: Get the controller angular velocity

Parameter: id: controller ID

Return value: Angular velocity in rad/s

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetAngularVelocity (id)

UPvr_GetAcceleration¶

Function name: public static Vector3 UPvr_GetAcceleration (int id)

Functions: Get the controller acceleration

Parameter: id: controller ID

Return value: Acceleration in m/s^2

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetAcceleration (id)

UPvr_GetVelocity¶

Function name: public static Vector3 UPvr_GetVelocity (int id)

Functions: Get the controller velocity

Parameter: id: controller ID

Return value: Velocity in m/s

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_GetVelocity (id)

UPvr_SetControllerOriginOffset¶

Function name: public static void UPvr_SetControllerOriginOffset(int hand, Vector3 offset)

Functions: Set the controller position offset

Parameter: hand: controller ID; offset: Vector3 offset value in m

Return value: None

Method of calling: Pvr_UnitySDKAPI.Controller.UPvr_SetControllerOriginOffset(hand, offset)

Note: New API in version 2.8.10

7.4 Power, volume and brightness service-related¶

UPvr_InitBatteryVolClass¶

Function name: public bool UPvr_InitBatteryVolClass()

Functions: Initialize power, volume and brightness service

Parameter: None

Return value: true: Successful false: Failure (Please call this interface to initialize before using services such as power, volume, brightness, etc.)

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_InitBatteryVolClass()

UPvr_StartAudioReceiver¶

Function name: public bool UPvr_StartAudioReceiver (string startreceiver)

Functions: Turn on volume service

Parameter: startreceiver: the name of the GameObject that starts the Audio Receiver

Return value: true: Successful false: Failure

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_StartAudioReceiver (startreceiver)

UPvr_SetAudio¶

Function name: public void UPvr_SetAudio(string s)

Functions: Callback when volume changes

Parameter: Current volume

Return value: None

Method of calling: You can either add logic directly within this method or use delegate to call

UPvr_StopAudioReceiver¶

Function name: public bool UPvr_StopAudioReceiver ()

Functions: Turn off volume service

Parameter: None

Return value: true: Successful false: Failure

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_StopAudioReceiver()

UPvr_StartBatteryReceiver¶

Function name: public bool UPvr_StartBatteryReceiver (string startreceiver)

Functions: Turn on power service

Parameter: startreceiver: the name of the GameObject that starts the Battery Receiver

Return value: true: Successful false: Failure

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_StartBatteryReceiver(startreceiver)

UPvr_SetBattery¶

Function name: public void UPvr_SetBattery(string s)

Functions: Callback when the battery level changes

Parameter: Current battery level (range: 0.00-1.00)

Return value: None

Method of calling: You can either add logic directly within this method or use delegate to call

UPvr_StopBatteryReceiver¶

Function name: public bool UPvr_StopBatteryReceiver ()

Functions: Turn off power service

Parameter: None

Return value: true: Successful false: Failure

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_StopBatteryReceiver()

UPvr_GetMaxVolumeNumber¶

Function name: public int UPvr_GetMaxVolumeNumber ()

Functions: Get maximum volume

Parameter: None

Return value: Maximum volume

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_GetMaxVolumeNumber ()

UPvr_GetCurrentVolumeNumber¶

Function name: public int UPvr_GetCurrentVolumeNumber ()

Functions: Get the current volume

Parameter: None

Return value: Current volume value (range is 0-15)

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_GetCurrentVolumeNumber ()

UPvr_VolumeUp¶

Function name: public bool UPvr_VolumeUp ()

Functions: Increase volume

Parameter: None

Return value: true: Successful false: Failure

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_VolumeUp ()

UPvr_VolumeDown¶

Function name: public bool UPvr_VolumeDown ()

Functions: Decrease volume

Parameter: None

Return value: true: Successful false: Failure

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_VolumeDown ()

UPvr_SetVolumeNum¶

Function name: public bool UPvr_SetVolumeNum(int volume)

Functions: Set volume

Parameter: Set volume value (volume value range is 0-15)

Return value: true: Successful false: Failure

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_SetVolumeNum(volume)
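
The volume calls above could be combined as in the following sketch, which initializes the service and then sets the volume to half of the reported maximum. It assumes the methods can be called statically, as the calling forms above show; the class name VolumeExample and the choice of half volume are illustrative.

```csharp
using UnityEngine;

// Hypothetical example component; only the UPvr_* calls come from the SDK.
public class VolumeExample : MonoBehaviour
{
    void Start()
    {
        // The service must be initialized before any volume call.
        if (!Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_InitBatteryVolClass())
        {
            Debug.LogWarning("UPvr_InitBatteryVolClass failed");
            return;
        }

        int max = Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_GetMaxVolumeNumber();
        int current = Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_GetCurrentVolumeNumber();
        Debug.Log("Volume " + current + " of " + max);

        // Example target: half of the maximum, kept inside the valid range.
        int target = Mathf.Clamp(max / 2, 0, max);
        if (!Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_SetVolumeNum(target))
        {
            Debug.LogWarning("UPvr_SetVolumeNum failed for value " + target);
        }
    }
}
```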

UPvr_GetScreenBrightnessLevel¶

Function name: public int[] UPvr_GetScreenBrightnessLevel ()

Functions: Gets the brightness level of the current screen

Parameter: None

Return value: int array. The first element is the total number of brightness levels supported, the second element is the current brightness level, and the elements from the third to the last are the interval values of the brightness levels

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_GetScreenBrightnessLevel()
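
The returned array packs several values, so a short decoding step can make it easier to use. The sketch below follows the layout described above (total levels, current level, then interval values); the class name BrightnessLevelReader is illustrative, and the null and length checks are defensive assumptions rather than documented behaviour.

```csharp
using UnityEngine;

// Hypothetical example component; only the UPvr_* calls come from the SDK.
public class BrightnessLevelReader : MonoBehaviour
{
    void Start()
    {
        // Brightness calls belong to the same service as power and volume.
        if (!Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_InitBatteryVolClass())
        {
            Debug.LogWarning("UPvr_InitBatteryVolClass failed");
            return;
        }

        int[] raw = Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_GetScreenBrightnessLevel();
        if (raw == null || raw.Length < 2)
        {
            Debug.LogWarning("Brightness level data unavailable");
            return;
        }

        int totalLevels = raw[0];  // first element: number of supported levels
        int currentLevel = raw[1]; // second element: current level

        // Remaining elements: interval values of the brightness levels.
        int[] intervals = new int[raw.Length - 2];
        for (int i = 2; i < raw.Length; i++)
        {
            intervals[i - 2] = raw[i];
        }

        Debug.Log("Brightness level " + currentLevel + " of " + totalLevels +
                  " (" + intervals.Length + " interval values)");
    }
}
```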

UPvr_SetScreenBrightnessLevel¶

Function name: public bool UPvr_SetScreenBrightnessLevel (int vrBrightness, int level)

Functions: Set the brightness of the screen

Parameter: vrBrightness: brightness mode; level: brightness level. If vrBrightness is 1, level is the brightness level to apply, a value between 1 and 255; if vrBrightness is 0, the system default brightness setting mode is used.

Return value: None

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_SetScreenBrightnessLevel (vrBrightness,level)

UPvr_GetCommonBrightness¶

Function name: public int UPvr_GetCommonBrightness ()

Functions: Get the brightness value of the current general device

Parameter: None

Return value: Current brightness value (brightness value range: 0-255)

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_GetCommonBrightness()

UPvr_SetCommonBrightness¶

Function name: public bool UPvr_SetCommonBrightness(int brightness)

Functions: Set the brightness value of the current general device

Parameter: Brightness value (brightness value range is 0-255)

Return value: true: Successful false: Failure

Method of calling: Pvr_UnitySDKAPI.VolumePowerBrightness.UPvr_SetCommonBrightness (brightness)

UPvr_GetHmdBatteryLevel(deprecated)¶

7.5 Head-mounted distance sensor related¶

UPvr_InitPsensor¶

Function name: public static void UPvr_InitPsensor()

Functions: Initialize the distance sensor

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.Sensor.UPvr_InitPsensor ()

UPvr_GetPsensorState¶

Function name: public static int UPvr_GetPsensorState()

Functions: Get the status of the head-mounted distance sensor

Parameter: None

Return value: 0: the headset is being worn; 1: the headset is away from the user

Method of calling: Pvr_UnitySDKAPI.Sensor.UPvr_GetPsensorState() (Note: UPvr_InitPsensor must be called once for initialization before getting the Psensor status)

UPvr_UnregisterPsensor¶

Function name: public static void UPvr_UnregisterPsensor ()

Functions: Release distance sensor

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.Sensor.UPvr_UnregisterPsensor ()
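
The three distance-sensor calls form an initialize, poll, release life cycle. A sketch of that life cycle in a Unity script follows; the class name WearStateWatcher and the -1 sentinel are illustrative and not SDK values.

```csharp
using UnityEngine;

// Hypothetical component; only the UPvr_* calls come from the SDK.
public class WearStateWatcher : MonoBehaviour
{
    private int lastState = -1; // local "not sampled yet" marker, not an SDK value

    void OnEnable()
    {
        // Must be called before the state is queried.
        Pvr_UnitySDKAPI.Sensor.UPvr_InitPsensor();
    }

    void Update()
    {
        int state = Pvr_UnitySDKAPI.Sensor.UPvr_GetPsensorState();
        if (state == lastState)
        {
            return; // only report changes
        }

        lastState = state;
        Debug.Log(state == 0 ? "Headset is being worn" : "Headset is not being worn");
    }

    void OnDisable()
    {
        Pvr_UnitySDKAPI.Sensor.UPvr_UnregisterPsensor();
        lastState = -1;
    }
}
```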

7.6 Hardware device related¶

UPvr_GetDeviceMode¶

Function name: public static string UPvr_GetDeviceMode ()

Functions: Get the device model

Parameter: None

Return value: SystemInfo.deviceModel

Method of calling: Pvr_UnitySDKAPI.System.UPvr_GetDeviceMode ()

UPvr_GetDeviceSN¶

Function name: public static string UPvr_GetDeviceSN ()

Functions: Get SN serial number of device

Parameter: None

Return value: Serial number SN of device

Method of calling: Pvr_UnitySDKAPI.System.UPvr_GetDeviceSN ()

UPvr_Sleep¶

Function name: public static void UPvr_Sleep()

Functions: Screen off

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.System.UPvr_Sleep()

Note: To use the UPvr_Sleep interface, you need a system signature and the following entries in AndroidManifest:

android:sharedUserId="android.uid.system"

<uses-permission android:name="android.permission.DEVICE_POWER" />

UPvr_SetExtraLatencyMode¶

Function name: public static bool UPvr_SetExtraLatencyMode (ExtraLatencyMode mode)

Functions: Set current ExtraLatencyMode (only need to call once)

Parameter: mode: one of the ExtraLatencyMode values:

public enum ExtraLatencyMode

{

ExtraLatencyModeOff = 0, // Disable ExtraLatencyMode. The latest rendered frame is displayed.

ExtraLatencyModeOn = 1, // Enable ExtraLatencyMode. The frame before the latest rendered frame is displayed.

ExtraLatencyModeDynamic = 2 // Use the system default setup.

}

Return value: true: Success; false: Failure

Method of calling: Pvr_UnitySDKAPI.System.UPvr_SetExtraLatencyMode (ExtraLatencyMode.ExtraLatencyModeOff);

UPvr_GetPredictedDisplayTime¶

Function name: public static float UPvr_GetPredictedDisplayTime ()

Functions: Get the predicted display delay (the interval between the end of frame rendering and the actual display)

Parameter: None

Return value: Delay time (milliseconds)

Method of calling: Pvr_UnitySDKAPI.System.UPvr_GetPredictedDisplayTime ();

7.7 Eye Tracking related¶

UPvr_getEyeTrackingPos¶

Function name: public static Vector3 UPvr_getEyeTrackingPos ()

Functions: Get the eye tracking position (Only applicable to Neo 2 Eye)

Parameter: None

Return value: Current eye tracking position

Method of calling: Pvr_UnitySDKAPI.System.UPvr_getEyeTrackingPos ()

UPvr_getEyeTrackingData¶

Function name: public static bool UPvr_getEyeTrackingData(ref EyeTrackingData trackingData)

Functions: Get eye tracking data, including openness etc. (Only applicable to Neo 2 Eye)

Parameter: trackingData: struct variable that receives the result

Return value: true: Success; false: Failure

Method of calling: Pvr_UnitySDKAPI.System.UPvr_getEyeTrackingData(ref trackingData)

Note: The acquired eye-tracking data is expressed in world coordinates. To place the position data in front of the camera, a matrix transform is required. See the following example code:

EyeTrackingData eyePoseData = new EyeTrackingData(); // struct that receives the result

bool result = Pvr_UnitySDKAPI.System.UPvr_getEyeTrackingData(ref eyePoseData);

bool dataValid = (eyePoseData.combinedEyePoseStatus & (int)pvrEyePoseStatus.kGazeVectorValid) != 0;

if (result && dataValid)

{

//Init Matrix

Transform target = Pvr_UnitySDKEyeManager.Instance.transform;

Matrix4x4 mat = Matrix4x4.TRS(target.position, target.rotation, Vector3.one);

//Transform origin

eyePoseData.combinedEyeGazePoint = mat.MultiplyPoint(eyePoseData.combinedEyeGazePoint);

//Transform vector

eyePoseData.combinedEyeGazeVector = mat.MultiplyVector(eyePoseData.combinedEyeGazeVector);

}

Notes:

Fields of the EyeTrackingData struct:

leftEyePoseStatus: confirm the pose status of the left eye

rightEyePoseStatus: confirm the pose status of the right eye

combinedEyePoseStatus: confirm the pose status of both eyes

leftEyeGazePoint: the gaze point of the left eye

rightEyeGazePoint: the gaze point of the right eye

combinedEyeGazePoint: the combined gaze point

leftEyeOpenness: openness/closeness of the left eye

rightEyeOpenness: openness/closeness of the right eye

leftEyePositionGuide: guide to the left eye position

rightEyePositionGuide: guide to the right eye position

combinedEyeGazeVector: the direction of the combined gaze

UPvr_getEyeTrackingGazeRay¶

Function name: public static bool UPvr_getEyeTrackingGazeRay(ref EyeTrackingGazeRay gazeRay)

Functions: Get the eye tracking origin and gaze direction (Only applicable to Neo 2 Eye)

Parameter: gazeRay: EyeTrackingGazeRay struct that receives the result

Return value: true: Successful; false: Failure

Method of calling: Pvr_UnitySDKAPI.System.UPvr_getEyeTrackingGazeRay(ref gazeRay)

Note: The returned gaze data is calibrated to the headset sensor. To keep the gaze ray in front of the camera when Pvr_UnitySDK moves, a matrix transform is required. See the following example code:

EyeTrackingGazeRay gazeRay = new EyeTrackingGazeRay(); // struct that receives the result

Pvr_UnitySDKAPI.System.UPvr_getEyeTrackingGazeRay(ref gazeRay);

if(gazeRay.IsValid)

{

//Init Matrix

Transform target = Pvr_UnitySDKManager.SDK.transform;

Matrix4x4 mat = Matrix4x4.TRS(target.position, target.rotation, Vector3.one);

//Transform ray origin

gazeRay.Origin = mat.MultiplyPoint(gazeRay.Origin);

//Transform ray direction

gazeRay.Direction = mat.MultiplyVector(gazeRay.Direction);

}

7.8 Foveated rendering related¶

GetFoveatedRenderingLevel¶

Function name: public static EFoveationLevel GetFoveatedRenderingLevel()

Functions: Get current foveated rendering level

Parameter: None

Return value: Current foveated rendering level

Method of calling: Pvr_UnitySDKAPI.Render.GetFoveatedRenderingLevel ()

SetFoveatedRenderingLevel¶

Function name: public static void SetFoveatedRenderingLevel(EFoveationLevel level)

Functions: Set foveated rendering level

Parameter: level: target foveated rendering level; there are 4 levels (Low, Med, High and Top High)

Return value: None

Method of calling: Pvr_UnitySDKAPI.Render.SetFoveatedRenderingLevel(level)

SetFoveatedRenderingParameters¶

Function name: public static void SetFoveatedRenderingParameters(Vector2 ffrGainValue, float ffrAreaValue, float ffrMinimumValue)

Functions: Set current foveated rendering parameters

Parameter: Target foveated rendering parameters

ffrGainValue: Reduction ratio of peripheral pixels in the X/Y direction. The larger the value, the greater the reduction.

ffrAreaValue: Size of the area around the gaze point that keeps its full resolution. The larger the value, the larger the clear central area.

ffrMinimumValue: Minimum pixel density. The pixel density never falls below ffrMinimumValue.

The SDK provides four preset levels:

| Level | ffrGainValue | ffrAreaValue | ffrMinimumValue |
| --- | --- | --- | --- |
| Low | (3.0f,3.0f) | 1.0f | 0.125f |
| Med | (4.0f,4.0f) | 1.0f | 0.125f |
| High | (6.0f,6.0f) | 1.0f | 0.0625f |
| Top High | (7.0f,7.0f) | 0.0f | 0.0625f |

Return value: None

Method of calling: Pvr_UnitySDKAPI.Render.SetFoveatedRenderingParameters (ffrGainValue, ffrAreaValue, ffrMinimumValue)
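
As a sketch of the custom-parameter call, the script below applies the values listed for the Med preset in the table above. The class name FoveationSetup is illustrative; whether custom parameters should be set at startup or later is a design choice the text above does not prescribe.

```csharp
using UnityEngine;

// Hypothetical example component; only SetFoveatedRenderingParameters comes from the SDK.
public class FoveationSetup : MonoBehaviour
{
    void Start()
    {
        // Values of the "Med" preset from the table above.
        Vector2 ffrGainValue = new Vector2(4.0f, 4.0f);
        float ffrAreaValue = 1.0f;
        float ffrMinimumValue = 0.125f;

        Pvr_UnitySDKAPI.Render.SetFoveatedRenderingParameters(
            ffrGainValue, ffrAreaValue, ffrMinimumValue);
    }
}
```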

7.9 User entitlement check related¶

Usage of user entitlement check has been changed. See Chapter 8.10 for the new method.

7.10 Safety Boundary related¶

UPvr_BoundaryGetConfigured¶

Function name: public static bool UPvr_BoundaryGetConfigured()

Functions: Return whether the Safety Boundary has been configured

Parameter: None

Return value: true: configured; false: not configured

Method of calling: Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundaryGetConfigured()

UPvr_BoundaryGetEnabled¶

Function name: public static bool UPvr_BoundaryGetEnabled()

Functions: Return whether the Safety Boundary is enabled

Parameter: None

Return value: true: enabled; false: not enabled

Method of calling: Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundaryGetEnabled()

UPvr_BoundarySetVisible¶

Function name: public static void UPvr_BoundarySetVisible(bool value)

Functions: Force the Safety Boundary to be visible even when the player stays within the boundary (Note: activation of the Safety Boundary or the user's configuration in system settings overrides this interface's action)

Parameter: value: true: visible; false: invisible

Return value: None

Method of calling: Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundarySetVisible(value)

UPvr_BoundaryGetVisible¶

Function name: public static bool UPvr_BoundaryGetVisible()

Functions: Get whether the safety boundary is visible or not

Parameter: None

Return value: Whether the safety boundary is visible or not

Method of calling: Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundaryGetVisible()
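
The boundary state queries above can be chained before forcing the boundary to be shown. A minimal sketch, where the class name BoundaryVisibilityExample is illustrative and the decision to show the boundary at startup is only for demonstration:

```csharp
using UnityEngine;

// Hypothetical example component; only the UPvr_Boundary* calls come from the SDK.
public class BoundaryVisibilityExample : MonoBehaviour
{
    void Start()
    {
        bool configured = Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundaryGetConfigured();
        bool enabled = Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundaryGetEnabled();

        if (!configured || !enabled)
        {
            Debug.Log("Safety Boundary is not configured or not enabled");
            return;
        }

        // Request that the boundary be shown; system settings may override this.
        Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundarySetVisible(true);

        bool visible = Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundaryGetVisible();
        Debug.Log("Safety Boundary visible: " + visible);
    }
}
```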

UPvr_BoundaryGetDimensions¶

Function name: public static Vector3 UPvr_BoundaryGetDimensions(BoundaryType boundaryType)

Functions: Get the size of the user-defined safety boundary PlayArea

Parameter: boundaryType: current boundary type, OuterBoundary or PlayArea

Return value: Vector3. x: length of PlayArea; y: 1; z: width of PlayArea. For an in-place (stationary) safety boundary, the Vector3 is (0,0,0).

Method of calling: Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundaryGetDimensions(boundaryType);

UPvr_BoundaryTestNode¶

Function name: public static BoundaryTestResult UPvr_BoundaryTestNode(BoundaryTrackingNode node, BoundaryType boundaryType)

Functions: Return the result of testing a tracking node against a specific boundary type. Tracking nodes include the HMD and the controllers. The result includes whether the boundary is triggered, the closest distance between the tracking node and the boundary, the closest point between the tracking node and the boundary, and the normal at the closest point

Parameter: node: tracking node; boundaryType: type of boundary

Return value: BoundaryTestResult, a struct holding the boundary test result

public struct BoundaryTestResult

{

public bool IsTriggering; // If boundary is triggered

public float ClosestDistance; // Minimum distance of tracking nodes and boundary

public Vector3 ClosestPoint; // Closest point of tracking nodes and boundary

public Vector3 ClosestPointNormal; // Normal of closest point

}

Method of calling: Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundaryTestNode(node, boundaryType);

UPvr_BoundaryTestPoint¶

Function name: public static BoundaryTestResult UPvr_BoundaryTestPoint(Vector3 point, BoundaryType boundaryType)

Functions: Test a point against a specific boundary type. The result includes whether the boundary is triggered, the closest distance between the point and the boundary, the closest point on the boundary to the tested point, and the normal at that closest point.

Parameters: point: coordinate of the point to test; boundaryType: type of boundary

Return Value: BoundaryTestResult, a struct holding the boundary test result

    public struct BoundaryTestResult
    {
        public bool IsTriggering;          // Whether the boundary is triggered
        public float ClosestDistance;      // Minimum distance between the tested point and the boundary
        public Vector3 ClosestPoint;       // Closest point between the tested point and the boundary
        public Vector3 ClosestPointNormal; // Normal of the closest point
    }

Method of calling: Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundaryTestPoint(point, boundaryType)
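
One possible way to act on a BoundaryTestResult is to turn it into a coarse proximity level. The sketch below is an assumption-laden illustration: `BoundaryProximity`, `BoundaryProximityHelper` and the `warnDistance` threshold are hypothetical, and the method takes plain values so that it compiles without Unity. In a Unity script the first two arguments would be the IsTriggering and ClosestDistance fields of the returned struct.

```csharp
// Hypothetical types, not part of the SDK.
public enum BoundaryProximity { Safe, Near, Triggered }

public static class BoundaryProximityHelper
{
    // isTriggering and closestDistance mirror the IsTriggering and
    // ClosestDistance fields of BoundaryTestResult. warnDistance is an
    // application-chosen threshold in the same unit as closestDistance.
    public static BoundaryProximity Classify(bool isTriggering, float closestDistance, float warnDistance)
    {
        if (isTriggering) return BoundaryProximity.Triggered;
        return closestDistance <= warnDistance
            ? BoundaryProximity.Near
            : BoundaryProximity.Safe;
    }
}
```

An application could, for example, fade in a warning overlay on `Near` and pause gameplay on `Triggered`.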

UPvr_BoundaryGetGeometry¶

Function name: public static Vector3[] UPvr_BoundaryGetGeometry(BoundaryType boundaryType)

Functions: Return the collection of boundary points

Parameter: boundaryType: type of safety boundary

Return Value: Vector3[]: Collection of safety boundary points

Method of Calling: Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundaryGetGeometry(boundaryType)

UPvr_GetDialogState¶

Function name: public static int UPvr_GetDialogState ()

Functions: Get boundary dialog state

Parameter: None

Return Value: NothingDialog = -1, GobackDialog = 0, ToofarDialog = 1, LostDialog = 2, LostNoReason = 3, LostCamera = 4, LostHighLight = 5, LostLowLight = 6, LostLowFeatureCount = 7, LostReLocation = 8

Method of Calling: Pvr_UnitySDKAPI.BoundarySystem.UPvr_GetDialogState();
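
Because the function returns a plain int, it can help to map the value onto named constants before branching on it. The following is a minimal sketch: the enum `BoundaryDialogState` and the helper `BoundaryDialogStates` are hypothetical names introduced here, with members copied from the documented return values. It does not depend on Unity; the raw value would come from UPvr_GetDialogState().

```csharp
using System;

// Hypothetical enum: names and values are copied from the documented return
// values of UPvr_GetDialogState. The SDK call itself returns an int.
public enum BoundaryDialogState
{
    NothingDialog = -1,
    GobackDialog = 0,
    ToofarDialog = 1,
    LostDialog = 2,
    LostNoReason = 3,
    LostCamera = 4,
    LostHighLight = 5,
    LostLowLight = 6,
    LostLowFeatureCount = 7,
    LostReLocation = 8
}

public static class BoundaryDialogStates
{
    // Converts the raw int into the enum and reports whether the value
    // is one of the documented states.
    public static bool TryParse(int raw, out BoundaryDialogState state)
    {
        state = (BoundaryDialogState)raw;
        return Enum.IsDefined(typeof(BoundaryDialogState), raw);
    }
}
```

Rejecting undocumented values keeps a `switch` over the enum from silently ignoring states added by later system versions.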

7.11 SeeThrough Camera related¶

UPvr_BoundaryGetSeeThroughData¶

Function name: public static void UPvr_BoundaryGetSeeThroughData(int cameraIndex, RenderTexture renderTexture)

Functions: Get the front camera image of Neo 2 and Neo 3 series

Parameter: cameraIndex: camera ID, 0 for the left camera, 1 for the right camera; renderTexture: texture that receives the camera image (texture size 640*640)

Others: This interface cannot be called directly; the camera must be opened first. For details, please refer to PicoMobileSDK/Pvr_UnitySDK/Scenes/Examples/GetSeeThroughImage.

Return Value: None

Method of Calling: Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundaryGetSeeThroughData(0, renderTexture)

UPvr_BoundarySetCameraImageRect¶

Function name: public static void UPvr_BoundarySetCameraImageRect(int width, int height)

Functions: Set the width and height of the camera image.

Parameter: width, height.

Return Value: None

Method of Calling: Pvr_UnitySDKAPI.BoundarySystem.UPvr_BoundarySetCameraImageRect(width, height)

UPvr_EnableSeeThroughManual¶

Function name: public static void UPvr_EnableSeeThroughManual(bool value)

Functions: Get the camera image of Neo 2 and set it as the environmental background

Parameter: value: whether SeeThrough is enabled; true: enabled, false: disabled

Return Value: None

Method of Calling: Pvr_UnitySDKAPI.BoundarySystem. UPvr_EnableSeeThroughManual(true)

Others:

- Set Clear Flags to Solid Color on the prefab’s cameras (LeftEye and RightEye)

- Set the alpha channel of the Background color to 0 on the prefab’s cameras (LeftEye and RightEye)

7.12 System related¶

The system-related interfaces let developers read and change certain system configuration settings as needed.

Supported devices:

| Devices | PUI Version |
|---|---|
| G2 4K/G2 4K E/G2 4K Plus | 4.0.3 and above |
| Neo 3 Pro/Neo 3 Pro Eye | All PUI versions |

Before using the interfaces in this chapter, please check the sample scene TobService.unity and the script “Pvr_ToBService.cs” to see how to initialize ToBService. “Pvr_ToBService.cs” can be attached directly to an object in your scene to bind the service. The interfaces are used as follows:

    // Initialize and bind ToBService. objectName is the name of the object that receives the callbacks.
    private void Awake()
    {
        Pvr_UnitySDKAPI.ToBService.UPvr_InitToBService();
        Pvr_UnitySDKAPI.ToBService.UPvr_SetUnityObjectName(objectName);
        Pvr_UnitySDKAPI.ToBService.UPvr_BindToBService();
    }

    // Unbind ToBService when the object is destroyed.
    private void OnDestroy()
    {
        Pvr_UnitySDKAPI.ToBService.UPvr_UnBindToBService();
    }

    // Add these 4 callback methods so that the corresponding callbacks can be received.
    private void BoolCallback(string value)
    {
        if (Pvr_UnitySDKAPI.ToBService.BoolCallback != null) Pvr_UnitySDKAPI.ToBService.BoolCallback(bool.Parse(value));
        Pvr_UnitySDKAPI.ToBService.BoolCallback = null;
    }

    private void IntCallback(string value)
    {
        if (Pvr_UnitySDKAPI.ToBService.IntCallback != null) Pvr_UnitySDKAPI.ToBService.IntCallback(int.Parse(value));
        Pvr_UnitySDKAPI.ToBService.IntCallback = null;
    }

    private void LongCallback(string value)
    {
        // Parse as long so that values outside the int range are not rejected.
        if (Pvr_UnitySDKAPI.ToBService.LongCallback != null) Pvr_UnitySDKAPI.ToBService.LongCallback(long.Parse(value));
        Pvr_UnitySDKAPI.ToBService.LongCallback = null;
    }

    private void StringCallback(string value)
    {
        // The string value is passed through unchanged.
        if (Pvr_UnitySDKAPI.ToBService.StringCallback != null) Pvr_UnitySDKAPI.ToBService.StringCallback(value);
        Pvr_UnitySDKAPI.ToBService.StringCallback = null;
    }

Make sure ToBService is bound successfully before calling the interfaces. To be notified when binding succeeds, add the following method to the initialization script; it is called once ToBService has been bound.

public void toBServiceBind(string s){Debug.Log("Bind success.");}
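
Since the interfaces in this chapter should only be called after binding succeeds, one option is to queue calls until toBServiceBind fires. The sketch below is a hypothetical helper (`ServiceBindGate` is not part of the SDK) written in plain C# so that it compiles without Unity; the comments show where the documented SDK pieces would plug in.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper, not an SDK type: defers work until the service is bound.
public sealed class ServiceBindGate
{
    private readonly Queue<Action> pending = new Queue<Action>();

    public bool IsBound { get; private set; }

    // Runs the action immediately when already bound, otherwise stores it.
    public void WhenBound(Action action)
    {
        if (IsBound) action();
        else pending.Enqueue(action);
    }

    // Intended to be called from the toBServiceBind(string) callback.
    // Flushes queued actions in the order they were registered.
    public void MarkBound()
    {
        IsBound = true;
        while (pending.Count > 0) pending.Dequeue()();
    }
}

// Assumed wiring inside the initialization script (Unity side, kept as comments):
//   private readonly ServiceBindGate gate = new ServiceBindGate();
//   public void toBServiceBind(string s) { gate.MarkBound(); }
//   gate.WhenBound(() => Pvr_UnitySDKAPI.ToBService.UPvr_AcquireWakeLock());
```

With this arrangement, code elsewhere in the scene can request ToBService calls at any time without checking the binding state itself.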

7.12.1 General Interfaces¶

UPvr_StateGetDeviceInfo¶

Function name: public static string UPvr_StateGetDeviceInfo(PBS_SystemInfoEnum type)

Function: Get device information

Parameter: type: Get information type of the device

PBS_SystemInfoEnum.ELECTRIC_QUANTITY:Battery Power

PBS_SystemInfoEnum.PUI_VERSION:PUI Version

PBS_SystemInfoEnum.EQUIPMENT_MODEL:Device Model

PBS_SystemInfoEnum.EQUIPMENT_SN:Device SN code

PBS_SystemInfoEnum.CUSTOMER_SN:Customer SN code

PBS_SystemInfoEnum.INTERNAL_STORAGE_SPACE_OF_THE_DEVICE:Device Storage

PBS_SystemInfoEnum.DEVICE_BLUETOOTH_STATUS:Bluetooth Status

PBS_SystemInfoEnum.BLUETOOTH_NAME_CONNECTED:Bluetooth Name

PBS_SystemInfoEnum.BLUETOOTH_MAC_ADDRESS:Bluetooth MAC Address

PBS_SystemInfoEnum.DEVICE_WIFI_STATUS:Wi-Fi connection status

PBS_SystemInfoEnum.WIFI_NAME_CONNECTED:Connected Wi-Fi ID

PBS_SystemInfoEnum.WLAN_MAC_ADDRESS:WLAN MAC Address

PBS_SystemInfoEnum.DEVICE_IP:Device IP Address

Return value: string: device information

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_StateGetDeviceInfo(PBS_SystemInfoEnum.PUI_VERSION)

UPvr_ControlSetAutoConnectWIFI¶

Function name: public static void UPvr_ControlSetAutoConnectWIFI(string ssid, string pwd,Action<bool> callback)

Function: Enable Auto-Connect to the specific WiFi on boot

Parameter: ssid: wifi name; pwd: wifi password; callback: whether WiFi is connected

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_ControlSetAutoConnectWIFI(ssid, pwd, callback)

UPvr_ControlClearAutoConnectWIFI¶

Function name: public static void UPvr_ControlClearAutoConnectWIFI(Action<bool> callback)

Function: Disable Auto-Connect to specific WiFi on boot

Parameter: callback: whether the connection is cleared

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_ControlClearAutoConnectWIFI(callback)

UPvr_PropertySetHomeKey¶

Function name: public static void UPvr_PropertySetHomeKey(PBS_HomeEventEnum eventEnum, PBS_HomeFunctionEnum function, Action<bool> callback)

Function: Set Home button press events. It will redefine the Home button and affect the system definition of it. Please use this function with discretion.

Parameter: eventEnum: single click, double click or long press events

PBS_HomeEventEnum.SINGLE_CLICK:Single Click

PBS_HomeEventEnum.DOUBLE_CLICK:Double Click

PBS_HomeEventEnum.LONG_PRESS:Long Press (Not applicable for Neo3)

function: launch the Settings application, return, calibration, etc.

PBS_HomeFunctionEnum.VALUE_HOME_GO_TO_SETTING:Open Settings

PBS_HomeFunctionEnum.VALUE_HOME_BACK:Back Home App (Not applicable for Neo3)

PBS_HomeFunctionEnum.VALUE_HOME_RECENTER:Recenter

PBS_HomeFunctionEnum.VALUE_HOME_OPEN_APP:Open Defined App (Not applicable for Neo3)

PBS_HomeFunctionEnum.VALUE_HOME_DISABLE:Disable Home Button

PBS_HomeFunctionEnum.VALUE_HOME_GO_TO_HOME:Back to PUI Launcher

PBS_HomeFunctionEnum.VALUE_HOME_SEND_BROADCAST:Send Home Button Broadcast (Not applicable for Neo3)

PBS_HomeFunctionEnum.VALUE_HOME_CLEAN_MEMORY:Clear Background Process (Not applicable for Neo3)

callback: whether the setting is successful

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_PropertySetHomeKey(eventEnum,function,callback)

UPvr_PropertySetHomeKeyAll¶

Function name: public static void UPvr_PropertySetHomeKeyAll(PBS_HomeEventEnum eventEnum, PBS_HomeFunctionEnum function, int timesetup, string pkg, string className, Action<bool> callback)

Function: Extended settings for Home button press

Parameter: eventEnum: single click, double click or long press events

PBS_HomeEventEnum.SINGLE_CLICK:Single Click

PBS_HomeEventEnum.DOUBLE_CLICK:Double Click

PBS_HomeEventEnum.LONG_PRESS:Long Press (Not applicable for Neo3)

function: launch the Settings application, return, calibration, etc.

PBS_HomeFunctionEnum.VALUE_HOME_GO_TO_SETTING:Open Settings

PBS_HomeFunctionEnum.VALUE_HOME_BACK:Back Home App (Not applicable for Neo3)

PBS_HomeFunctionEnum.VALUE_HOME_RECENTER:Recenter

PBS_HomeFunctionEnum.VALUE_HOME_OPEN_APP:Open Defined App (Not applicable for Neo3)

PBS_HomeFunctionEnum.VALUE_HOME_DISABLE:Disable Home Button

PBS_HomeFunctionEnum.VALUE_HOME_GO_TO_HOME:Back to PUI Launcher

PBS_HomeFunctionEnum.VALUE_HOME_SEND_BROADCAST:Send Home Button Broadcast (Not applicable for Neo3)

PBS_HomeFunctionEnum.VALUE_HOME_CLEAN_MEMORY:Clear Background Process (Not applicable for Neo3)

timesetup: press interval; only double click and long press events need it. The system default intervals are 300 ms for double click and 500 ms for long press. Enter the desired value to change the double-click/long-press interval. Enter 0 for short press.

pkg: when function is VALUE_HOME_OPEN_APP, enter the specific package name; enter null in other cases

className: when function is VALUE_HOME_OPEN_APP, enter the specific class name; enter null in other cases

callback: whether the setting is successful

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_PropertySetHomeKeyAll(eventEnum,function, timesetup, pkg, className, callback)

UPvr_PropertyDisablePowerKey¶

Function name: public static void UPvr_PropertyDisablePowerKey(bool isSingleTap, bool enable,Action<int> callback)

Function: Set Power button press events

Parameter: isSingleTap: true for the single click event, false for the long press event; enable: whether the selected button press event is enabled

callback: whether the setting is successful

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_PropertyDisablePowerKey(isSingleTap, enable,callback)

UPvr_PropertySetScreenOffDelay¶

Function name: public static void UPvr_PropertySetScreenOffDelay(PBS_ScreenOffDelayTimeEnum timeEnum,Action<int> callback)

Function: Set the screen off delay. Note that the screen off delay should not be larger than the system sleep delay.

Parameter: timeEnum: delayed time for screen off

PBS_ScreenOffDelayTimeEnum.THREE:3 Seconds

PBS_ScreenOffDelayTimeEnum.TEN:10 Seconds

PBS_ScreenOffDelayTimeEnum.THIRTY:30 Seconds

PBS_ScreenOffDelayTimeEnum.SIXTY:60 Seconds

PBS_ScreenOffDelayTimeEnum.THREE_HUNDRED:5 Minutes

PBS_ScreenOffDelayTimeEnum.SIX_HUNDRED:10 Minutes

PBS_ScreenOffDelayTimeEnum.NEVER:Never Turn Screen Off

callback: whether the setting is successful

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_PropertySetScreenOffDelay(timeEnum,callback)

UPvr_PropertySetSleepDelay¶

Function name: public static void UPvr_PropertySetSleepDelay(PBS_SleepDelayTimeEnum timeEnum)

Function: Set the system sleep delay

Parameter: timeEnum: system sleep time delay

PBS_SleepDelayTimeEnum.FIFTEEN:15 Seconds

PBS_SleepDelayTimeEnum.THIRTY:30 Seconds

PBS_SleepDelayTimeEnum.SIXTY:60 Seconds

PBS_SleepDelayTimeEnum.THREE_HUNDRED:5 Minutes

PBS_SleepDelayTimeEnum.SIX_HUNDRED:10 Minutes

PBS_SleepDelayTimeEnum.ONE_THOUSAND_AND_EIGHT_HUNDRED:30 Minutes

PBS_SleepDelayTimeEnum.NEVER:Never Sleep

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_PropertySetSleepDelay(timeEnum)
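
The screen off delay is documented as not being allowed to exceed the system sleep delay, so an application may want to check a pair of values before applying them. The sketch below is a hypothetical helper (`DelayCheck` is not part of the SDK) that works on plain seconds, with null standing for the NEVER option of either enum; how enum members are converted to seconds is left to the caller.

```csharp
// Hypothetical helper, not an SDK type.
public static class DelayCheck
{
    // Both arguments are delays in seconds; null represents NEVER.
    // Returns true when the screen off delay does not exceed the sleep delay.
    public static bool ScreenOffFitsSleep(int? screenOffSeconds, int? sleepSeconds)
    {
        // The system never sleeps, so any screen off delay is acceptable.
        if (sleepSeconds == null) return true;

        // The screen never turns off but the system does sleep: too large.
        if (screenOffSeconds == null) return false;

        return screenOffSeconds.Value <= sleepSeconds.Value;
    }
}
```

For example, a 30 second screen off delay fits a 60 second sleep delay, while a 5 minute screen off delay does not.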

UPvr_SwitchSystemFunction¶

Function name: public static void UPvr_SwitchSystemFunction(PBS_SystemFunctionSwitchEnum systemFunction, PBS_SwitchEnum switchEnum)

Function: set configurations in system settings

Parameter: systemFunction: type of function

PBS_SystemFunctionSwitchEnum.SFS_USB:USB Debugging

PBS_SystemFunctionSwitchEnum.SFS_AUTOSLEEP:Auto Sleep

PBS_SystemFunctionSwitchEnum.SFS_SCREENON_CHARGING:Screen-On Charging

PBS_SystemFunctionSwitchEnum.SFS_OTG_CHARGING:OTG Charging

PBS_SystemFunctionSwitchEnum.SFS_RETURN_MENU_IN_2DMODE:Return Button In 2D Mode

PBS_SystemFunctionSwitchEnum.SFS_COMBINATION_KEY:Key Combination

PBS_SystemFunctionSwitchEnum.SFS_CALIBRATION_WITH_POWER_ON:Recenter/Re-Calibration On Device Boot

PBS_SystemFunctionSwitchEnum.SFS_SYSTEM_UPDATE:System Update

PBS_SystemFunctionSwitchEnum.SFS_CAST_SERVICE:Casting Service (This Property is not valid when using Pico Enterprise Solution)

PBS_SystemFunctionSwitchEnum.SFS_EYE_PROTECTION:Eye-Protection Mode

PBS_SystemFunctionSwitchEnum.SFS_SECURITY_ZONE_PERMANENTLY:Disable 6Dof Safety Boundary Permanently

PBS_SystemFunctionSwitchEnum.SFS_GLOBAL_CALIBRATION:Global Recenter/Re-Calibrate (Only available for G2 series)

PBS_SystemFunctionSwitchEnum.SFS_Auto_Calibration:Auto Recenter/Re-Calibrate

PBS_SystemFunctionSwitchEnum.SFS_USB_BOOT:USB Plug-in Boot

switchEnum: value of switch

PBS_SwitchEnum.S_ON:On

PBS_SwitchEnum.S_OFF:Off

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_SwitchSystemFunction(systemFunction,switchEnum)

UPvr_SwitchSetUsbConfigurationOption¶

Function name: public static void UPvr_SwitchSetUsbConfigurationOption(PBS_USBConfigModeEnum uSBConfigModeEnum)

Function: Set USB configuration mode (MTP, charging)

Parameter: uSBConfigModeEnum: MTP, charging

PBS_USBConfigModeEnum.MTP:MTP Mode

PBS_USBConfigModeEnum.CHARGE:Charging Mode

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_SwitchSetUsbConfigurationOption(uSBConfigModeEnum)

UPvr_AcquireWakeLock¶

Function name: public static void UPvr_AcquireWakeLock()

Functions: Acquire wakelock

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_AcquireWakeLock ();

UPvr_ReleaseWakeLock¶

Function name: public static void UPvr_ReleaseWakeLock()

Functions: Release wakelock

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_ReleaseWakeLock ();

UPvr_EnableEnterKey¶

Function name: public static void UPvr_EnableEnterKey()

Functions: Enable Confirm key

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_EnableEnterKey();

UPvr_DisableEnterKey¶

Function name: public static void UPvr_DisableEnterKey()

Functions: Disable Confirm key

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_DisableEnterKey();

UPvr_EnableVolumeKey¶

Function name: public static void UPvr_EnableVolumeKey()

Functions: Enable Volume key

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_EnableVolumeKey();

UPvr_DisableVolumeKey¶

Function name: public static void UPvr_DisableVolumeKey()

Functions: Disable Volume key

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_DisableVolumeKey();

UPvr_EnableBackKey¶

Function name: public static void UPvr_EnableBackKey()

Functions: Enable Back key

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_EnableBackKey();

UPvr_DisableBackKey¶

Function name: public static void UPvr_DisableBackKey()

Functions: Disable Back key

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_DisableBackKey();

UPvr_WriteConfigFileToDataLocal¶

Function name: public static void UPvr_WriteConfigFileToDataLocal(string path, string content, Action<bool> callback)

Functions: Write a configuration file under /data/local/tmp

Parameter: path: configuration file path (“/data/local/tmp/config.txt”), content: configuration file content (“” ), callback: whether the configuration file has been successfully written

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_WriteConfigFileToDataLocal(path, content, callback);

UPvr_ResetAllKeyToDefault¶

Function name: public static void UPvr_ResetAllKeyToDefault (Action<bool> callback)

Functions: Reset all keys to default

Parameter: callback: whether all keys have been successfully reset to default

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_ResetAllKeyToDefault(callback);

UPvr_OpenMiracast (New)¶

Function name: public static void UPvr_OpenMiracast()

Functions: Turn on the screencast function.

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_OpenMiracast();

UPvr_IsMiracastOn (New)¶

Function name: public static bool UPvr_IsMiracastOn()

Functions: Get the status of the screencast.

Parameter: None

Return value: true: on; false: off.

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_IsMiracastOn();

UPvr_CloseMiracast (New)¶

Function name: public static void UPvr_CloseMiracast ()

Functions: Turn off the screencast function.

Parameter: None

Return value:None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_CloseMiracast ();

UPvr_StartScan (New)¶

Function name: public static void UPvr_StartScan()

Functions: Start scanning for devices that can receive the screencast

Parameter: None

Return value:None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_StartScan();

UPvr_StopScan (New)¶

Function name: public static void UPvr_StopScan()

Functions: Stop scanning for devices that can receive the screencast

Parameter: None

Return value:None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_StopScan();

UPvr_SetWDJsonCallback (New)¶

Function name: public static void UPvr_SetWDJsonCallback ()

Functions: Set the callback for scan results. The callback returns a string in JSON format that includes the devices connected and scanned before.

Parameter: None

Return value:None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_SetWDJsonCallback ();

UPvr_ConnectWifiDisplay (New)¶

Function name: public static void UPvr_ConnectWifiDisplay(string modelJson)

Functions: Project to the specified device.

Parameter: modelJson: a JSON description of the target device, for example:

    {
        "deviceAddress": "e2:37:bf:76:33:c6",
        "deviceName": "\u5BA2\u5385\u7684\u5C0F\u7C73\u7535\u89C6",
        "isAvailable": "true",
        "canConnect": "true",
        "isRemembered": "false",
        "statusCode": "-1",
        "status": "",
        "description": ""
    }

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_ConnectWifiDisplay(modelJson);
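
To make the shape of modelJson concrete, the sketch below assembles a string with the same keys as the documented example. It is only an illustration under assumptions: `WifiDisplayJson` is a hypothetical helper, the fixed values are copied from the example, and which fields the service actually requires is not stated in this document. In practice the object would more likely be taken from the scan result callback than built by hand.

```csharp
using System.Text;

// Hypothetical helper, not an SDK type: builds a JSON object with the keys
// shown in the documented modelJson example. All values are JSON strings.
public static class WifiDisplayJson
{
    // Wraps a value in double quotes, escaping backslashes and quotes.
    // Control characters are not handled in this minimal sketch.
    private static string Quote(string value)
    {
        return "\"" + value.Replace("\\", "\\\\").Replace("\"", "\\\"") + "\"";
    }

    private static void AppendField(StringBuilder sb, string key, string value, bool last)
    {
        sb.Append(Quote(key)).Append(':').Append(Quote(value));
        if (!last) sb.Append(',');
    }

    public static string Build(string deviceAddress, string deviceName)
    {
        var sb = new StringBuilder("{");
        AppendField(sb, "deviceAddress", deviceAddress, false);
        AppendField(sb, "deviceName", deviceName, false);
        AppendField(sb, "isAvailable", "true", false);
        AppendField(sb, "canConnect", "true", false);
        AppendField(sb, "isRemembered", "false", false);
        AppendField(sb, "statusCode", "-1", false);
        AppendField(sb, "status", "", false);
        AppendField(sb, "description", "", true);
        return sb.Append('}').ToString();
    }
}
```

The resulting string could then be passed as the modelJson argument of UPvr_ConnectWifiDisplay.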

UPvr_DisConnectWifiDisplay (New)¶

Function name: public static void UPvr_DisConnectWifiDisplay ()

Functions: Disconnect the current screencast.

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_DisConnectWifiDisplay ();

UPvr_ForgetWifiDisplay (New)¶

Function name: public static void UPvr_ForgetWifiDisplay(string address)

Functions: Forget the devices that have been connected.

Parameter: address: the address of the device.

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_ForgetWifiDisplay(address);

UPvr_RenameWifiDisplay (New)¶

Function name: public static void UPvr_RenameWifiDisplay(string address, string newName)

Functions: Modify the name of the connected device (only the name for local storage)

Parameter: address: the address of the device; newName: target name of the device.

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_RenameWifiDisplay(address, newName);

UPvr_UpdateWifiDisplays (New)¶

Function name: public static void UPvr_UpdateWifiDisplays(Action<string>callback)

Functions: Manually update the list.

Parameter: callback: the result of the SetWDJsonCallback.

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_UpdateWifiDisplays(callback);

UPvr_GetConnectedWD (New)¶

Function name: public static void UPvr_GetConnectedWD()

Functions: Get the information of the currently connected device.

Parameter: None.

Return value: Return the currently connected device information.

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_GetConnectedWD();

7.12.2 Protected interfaces¶

Note: To protect system stability and the user experience, applications that use the following interfaces are not allowed to be published on Pico Store. To use these interfaces, add the following meta-data tag to AndroidManifest:

<meta-data android:name="pico_advance_interface" android:value="0"/>

UPvr_ControlSetDeviceAction¶

Function name: public static void UPvr_ControlSetDeviceAction(PBS_DeviceControlEnum deviceControl, Action<int> callback)

Function: Power off or reboot the device

Parameter: deviceControl: the action to perform, power off or reboot

PBS_DeviceControlEnum.DEVICE_CONTROL_REBOOT:Reboot

PBS_DeviceControlEnum.DEVICE_CONTROL_SHUTDOWN:Power Off

callback: callback interface, whether power off/reboot is successful

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_ControlSetDeviceAction(PBS_DeviceControlEnum.DEVICE_CONTROL_SHUTDOWN,callback)

UPvr_ControlAPPManger¶

Function name: public static void UPvr_ControlAPPManger(PBS_PackageControlEnum packageControl, string path, Action<int> callback)

Function: Silent install/uninstall application

Parameter:

packageControl: Silent install/uninstall application

PBS_PackageControlEnum.PACKAGE_SILENCE_INSTALL:Install

PBS_PackageControlEnum.PACKAGE_SILENCE_UNINSTALL:Uninstall

path: apk path of the application to install/package name of the application to uninstall

callback: calling back interfaces, whether the install/uninstall is successful

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService.UPvr_ControlAPPManger(PBS_PackageControlEnum.PACKAGE_SILENCE_UNINSTALL, "com.xxx.xxx", callback)
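
Because the path argument carries an APK file path for installs but a package name for uninstalls, a quick sanity check before the call can catch mixed-up arguments. The sketch below is a hypothetical helper (`AppManagerArgs` is not part of the SDK); the ".apk" suffix test and the package name pattern are assumptions based on common Android conventions, not rules stated by this interface.

```csharp
using System;
using System.Text.RegularExpressions;

// Hypothetical helper, not an SDK type.
public static class AppManagerArgs
{
    // Dot-separated segments, each starting with a letter, at least two segments.
    private static readonly Regex PackageName =
        new Regex(@"^[A-Za-z][A-Za-z0-9_]*(\.[A-Za-z][A-Za-z0-9_]*)+$");

    // For PACKAGE_SILENCE_INSTALL the argument is expected to be an APK file path.
    public static bool LooksLikeApkPath(string path)
    {
        return !string.IsNullOrEmpty(path)
            && path.EndsWith(".apk", StringComparison.OrdinalIgnoreCase);
    }

    // For PACKAGE_SILENCE_UNINSTALL the same argument carries a package name.
    public static bool LooksLikePackageName(string name)
    {
        return !string.IsNullOrEmpty(name) && PackageName.IsMatch(name);
    }
}
```

A caller could refuse to invoke UPvr_ControlAPPManger when the check for the chosen mode fails, and report the problem instead.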

UPvr_ScreenOn¶

Function name: public static void UPvr_ScreenOn()

Function: Turn screen on

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_ScreenOn();

UPvr_ScreenOff¶

Function name: public static void UPvr_ScreenOff()

Function: Turn screen off

Parameter: None

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_ScreenOff();

UPvr_SetAPPAsHome¶

Function name: public static void UPvr_SetAPPAsHome(PBS_SwitchEnum switchEnum, string packageName)

Function: Set desired application as Kiosk Mode launcher

Parameter: switchEnum: switch on/off,packageName: package name of application to set as launcher

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_SetAPPAsHome(switchEnum,packageName);

UPvr_KillAppsByPidOrPackageName (New)¶

Function name: public static void UPvr_KillAppsByPidOrPackageName(int[] pids, string[] packageNames)

Function: Kill the application by passing in the application pid or package name.

Parameter: pids: an array of application pids; packageNames: an array of package names.

Return value: None

Method of calling: Pvr_UnitySDKAPI.ToBService. UPvr_KillAppsByPidOrPackageName(pids, packageNames);

7.13 Achievement system related¶

7.13.1 Preparations¶

To access the Achievement system, developers need to create the application on Pico Developer Platform and obtain APP ID. Steps:

- Log on to Pico Developer Platform (https://developer.pico-interactive.com/) and sign up for a Pico membership

- Apply to be a developer

Developers are either individual developers or enterprise developers; please apply according to your actual situation. After submission, we will give feedback within 3 working days. Please check the status in time.

- View Merchant ID

- Create Achievement

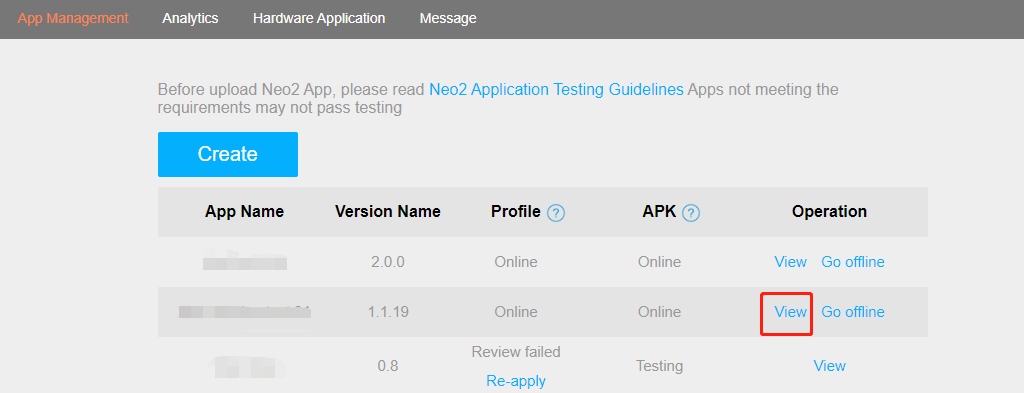

(1). Click “View” for more operations. If you don’t have any App yet, create one first. After successful creation, the platform automatically assigns a unique APP ID.

Figure 7.13.1 Pico Developer Platform

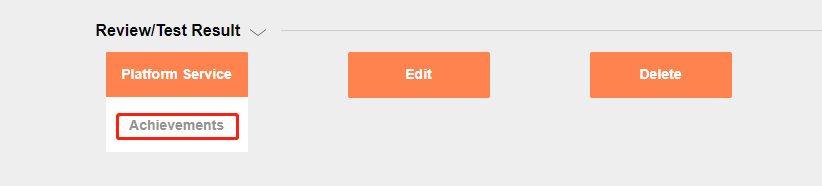

(2). Click the button of “Platform Service - Achievements” at the bottom of the View page.

Figure 7.13.2 Pico Developer Platform

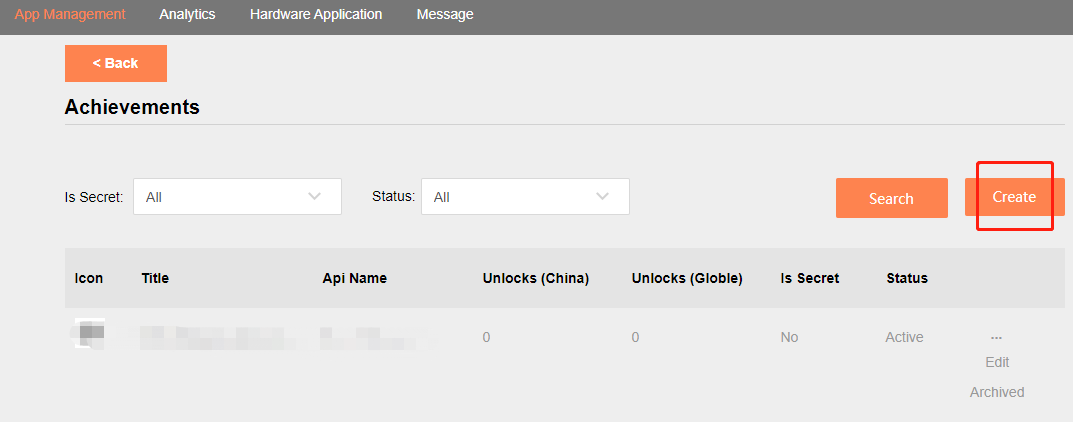

(3). Check the list of achievements you have created. Click “Create” if you don’t have any achievement.

Figure 7.13.3 Pico Developer Platform

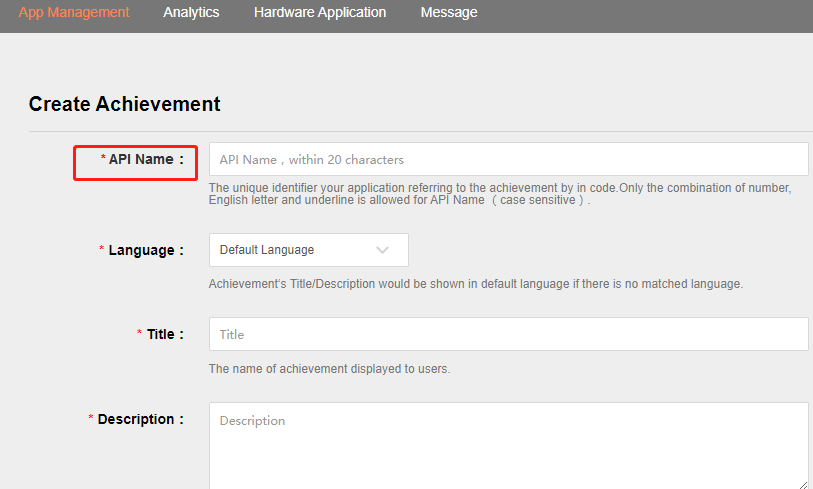

(4). Fill in the fields of your achievement. The API Name must be the same as the name used in your code.

- Achievement types:

(1). Simple: Unlock by completing a single event or target without achievement progress

(2). Counter: Unlock when the specified number of targets is reached (e.g. reach 5 targets to unlock, Target = 5)

(3). Bitfield: Unlock when the specified number of targets in the specified range is reached (e.g. reach 3 out of 7 targets to unlock, Target = 3, Bitfield Length = 7)
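
The unlock rules for the Counter and Bitfield types can be sketched as follows. This is a local illustration only: `AchievementRules` is a hypothetical helper, the platform evaluates the real unlock state itself, and representing a bitfield as a string of '0' and '1' characters is an assumption that this document does not confirm.

```csharp
using System.Linq;

// Hypothetical helper, not an SDK type: mirrors the documented unlock rules.
public static class AchievementRules
{
    // Counter: unlocked once the accumulated count reaches the Target.
    public static bool CounterUnlocked(long count, long target)
    {
        return count >= target;
    }

    // Bitfield: unlocked once at least Target of the fields are set.
    // Assumes one character per field, '1' meaning the field is set.
    public static bool BitfieldUnlocked(string fields, int target)
    {
        return fields.Count(c => c == '1') >= target;
    }
}
```

With Target = 3 and Bitfield Length = 7, a value such as "1010100" has three fields set and would count as unlocked under this assumption.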

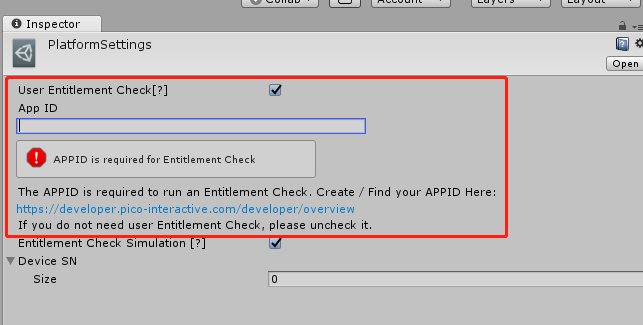

- Configure the application APP ID in Platform Settings

(1). Configure a valid APP ID in the project Platform Settings and Manifest.xml:

Figure 7.13.4 Pico Developer Platform

(2). See Chapter 9.1.1 and Chapter 9.1.2 for detailed AndroidManifest configuration.

7.13.2 Calling interfaces¶

Achievements.Init¶

Function name: public static Pvr_Request<Pvr_AchievementUpdate> Init()

Function: Achievement system initialization

Parameter: None

Return value: Result of calling

Method of calling: Pvr_UnitySDKAPI. Achievements.Init()

Achievements.GetAllDefinitions¶

Function name: public static Pvr_Request<Pvr_AchievementDefinitionList> GetAllDefinitions()

Function: Get all definitions of achievements

Parameter: None

Return value: Result of calling

Method of calling: Pvr_UnitySDKAPI. Achievements. GetAllDefinitions ()

Achievements.GetAllProgress¶

Function name: public static Pvr_Request<Pvr_AchievementProgressList> GetAllProgress()

Function: Get progress of all modified achievements

Parameter: None

Return value: Result of calling

Method of calling: Pvr_UnitySDKAPI. Achievements. GetAllProgress ()

Achievements.GetDefinitionsByName¶

Function name: public static Pvr_Request<Pvr_AchievementDefinitionList> GetDefinitionsByName(string[] names)

Function: Get definitions by name

Parameter: names: the apinames of the achievements whose definitions are requested

Return value: Result of calling

Method of calling: Pvr_UnitySDKAPI. Achievements. GetDefinitionsByName (names)

Achievements.GetProgressByName¶

Function name: public static Pvr_Request<Pvr_AchievementProgressList> GetProgressByName(string[] names)

Function: Get achievement progress by name

Parameter: names: the apinames of the achievements whose progress is requested

Return value: Result of calling

Method of calling: Pvr_UnitySDKAPI. Achievements. GetProgressByName (names)

Achievements.AddCount¶

Function name: public static Pvr_Request<Pvr_AchievementUpdate> AddCount(string name, long count)

Function: Add to the count of a Counter achievement

Parameter: name: achievement apiname; count: amount to add

Return value: Result of calling

Method of calling: Pvr_UnitySDKAPI.Achievements.AddCount(name, count)

Achievements.AddFields¶

Function name: public static Pvr_Request<Pvr_AchievementUpdate> AddFields(string name, string fields)

Function: Add fields to a Bitfield achievement

Parameter: name: achievement apiname; fields: fields to add

Return value: Result of calling

Method of calling: Pvr_UnitySDKAPI.Achievements.AddFields(name, fields)

Achievements.Unlock¶

Function name: public static Pvr_Request<Pvr_AchievementUpdate> Unlock(string name)

Function: Unlock achievement according to apiname

Parameter: name: achievement apiname

Return value: Result of calling

Method of calling: Pvr_UnitySDKAPI. Achievements. Unlock (name)

Achievements.GetNextAchievementDefinitionListPage¶

Function name: public static Pvr_Request<Pvr_AchievementDefinitionList> GetNextAchievementDefinitionListPage (Pvr_AchievementDefinitionList list)

Function: When getting all the achievement definitions, if there are multiple pages, get the next page

Parameter: list: the achievement definition list whose next page is requested

Return value: Result of calling

Method of calling: Pvr_UnitySDKAPI.Achievements.GetNextAchievementDefinitionListPage(achievementDefinitionList)

Achievements.GetNextAchievementProgressListPage¶

Function name: public static Pvr_Request<Pvr_AchievementProgressList> GetNextAchievementProgressListPage(Pvr_AchievementProgressList list)

Function: When getting all modified achievement progress information, if there are multiple pages, get the next page

Parameter: list: the achievement progress list whose next page is requested

Return value: Result of calling

Method of calling: Pvr_UnitySDKAPI.Achievements.GetNextAchievementProgressListPage(achievementProgressList)

7.13.3 Notes¶

(1). Log in to the device with a Pico account before using the achievement interfaces and functions.

(2). To avoid errors in achievement data caused by account switching or logout, call the initialization again when the App resumes, so that the App gets the correct user login information.

(3). During development and debugging, the following methods can be used to debug and test:

- Logcat

- View the data from the Developer Platform - Apps - Achievements page